Yeah, somewhere between the 3070 and 3080 is what I expect. AMD cites Navi20 improvements over Navi10, and while that never really scales 1:1, AMD’s doing a few things here.

The 6800 looks similar in hardware to the 5700 XT, but it’s RDNA2 and has noticeably higher base and potential boost clocks, giving it two improvements over the 5700.

The 6900 has two variants, I assume an XT and an XTX again (an Anniversary-type variant that’s a bit better binned with higher clock speed potential). Its clocks are a bit lower than the 6800’s, but it has a lot more cores than the 5700 had, plus RDNA2 and still somewhat higher clocks than the 5700.

FLOP comparisons get thrown around about how AMD only has ~20-something TFLOPS versus NVIDIA’s ~30-something, but AMD has gained per FLOP over Navi10, whereas NVIDIA’s performance per FLOP is a bit below Turing’s. That also depends on the type of workload, and when Ampere can leverage its full power, it’s really fast.

(The 3090 is also power limited to a larger degree, but custom designs, BIOS mods, or hardware mods like shunt mods could remove that issue.)
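For anyone wondering where the headline TFLOPS numbers come from: they are peak figures, roughly 2 FP32 operations per shader per clock (one fused multiply-add) times shader count times clock. Here’s a minimal Python sketch of that arithmetic. It assumes the published reference boost-clock specs for the 5700 XT (2560 stream processors, 1905 MHz) and the RTX 3080 (8704 CUDA cores, 1710 MHz) as example inputs, and it says nothing about how much of that peak a real game actually reaches.

```python
def peak_fp32_tflops(shader_count: int, boost_clock_ghz: float) -> float:
    """Theoretical peak FP32 throughput in TFLOPS.

    Assumes each shader retires one fused multiply-add (counted as 2 FLOPs)
    per clock at the boost clock; real workloads rarely sustain this.
    """
    flops_per_clock = 2 * shader_count
    # shaders * GHz gives GFLOPS; divide by 1000 for TFLOPS
    return flops_per_clock * boost_clock_ghz / 1000.0


# Example inputs: published reference boost clocks (assumed, not measured here).
rx_5700_xt = peak_fp32_tflops(2560, 1.905)
rtx_3080 = peak_fp32_tflops(8704, 1.710)

print(f"RX 5700 XT: {rx_5700_xt:.2f} TFLOPS")  # RX 5700 XT: 9.75 TFLOPS
print(f"RTX 3080:   {rtx_3080:.2f} TFLOPS")    # RTX 3080:   29.77 TFLOPS
print(f"Peak ratio: {rtx_3080 / rx_5700_xt:.2f}x")
```

The peak ratio comes out around 3x, far more than the gap between these two cards in actual games, which is why raw FLOP comparisons across architectures mislead.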

Still, it’s never really going to scale perfectly, but it’s not going to be another 30% from AMD either. So the 6800 could push close to the 3060 or the 3070, with the 6900 closing the gap to the 3080. This is all theoretical, though, and we’ll see how the GPU scales, and in which scenarios, once actual info becomes available closer to launch.

The 5700 still scales mostly in Vulkan and D3D12; D3D11 performance is very variable, and D3D9, while now more stable, is pretty poor compared to Vega or earlier.

The 5700 and newer also have the hardware (the cache was redesigned) for AMD to leverage D3D11.1 multi-threading and potentially see gains like NVIDIA did, but that would take a lot of work over a year or more, and until then it remains a performance challenge.

D3D12 and Vulkan performance depends on developers following both AMD’s and NVIDIA’s way of doing things, which means either both work well or one shows more substantial gains. The two vendors differ in what they recommend as best practices, and Vulkan also has extensions that make a difference.

(But there are still several titles that don’t use Vulkan 1.2.x and are thus limited to what 1.1.x offers.)

What else? Well, probably just the driver and software situation. AMD’s working out the RDNA1 issues, but the regressions for Vega, Polaris and Fury are still there, along with the Radeon VII issues. RDNA2 is going to start this entire process over, but hopefully it will be less of a mess for the … first year.

(That took way too long to get sorted, and there are still a variety of hardware-level issues too.)

EDIT: This still needs actual confirmed info and more details, though. There’s a lot of misinformation and rumor, even with Linux and Mac driver code now mostly confirming some of the details on this new hardware.

These are theoretical “up to” gains until we have actual benchmark results showing how well the additional hardware, the clock speeds and the RDNA2 instruction set actually scale.

EDIT: Though that’s true for NVIDIA and Ampere too; some games and game engines either hit a CPU limit or don’t scale well for other reasons.

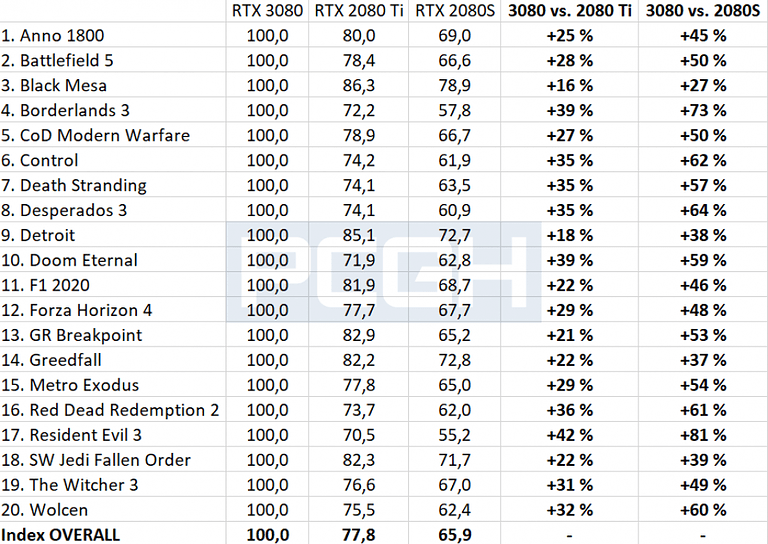

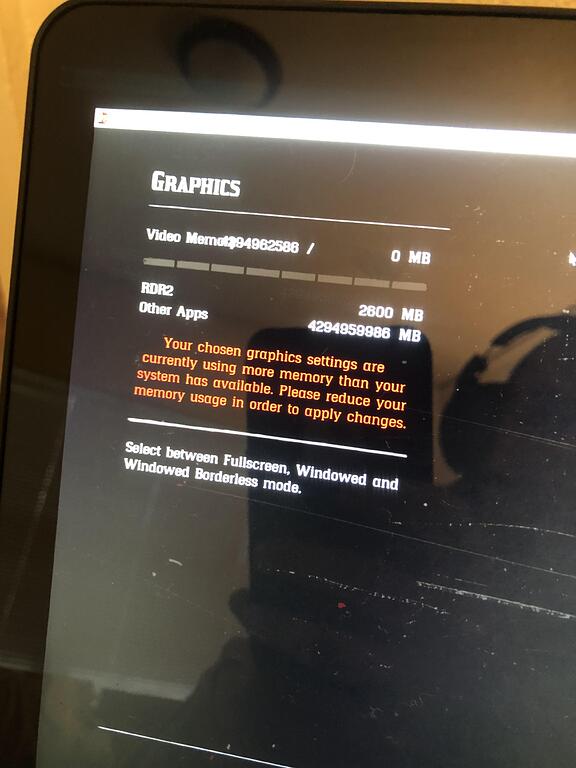

EDIT: Ah yes, the 4 to 6% performance lead here with the 2080 S. Well, at least the Ti figures made it.

Still really fast, though. Bridging the performance gap between the current 5700 and the 2080 Ti would need a gain of almost 40% or so, and then an additional 30% to 40% on top of that to get close to competing with the 3080’s performance level.
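Those two gains stack multiplicatively rather than add. Here’s a quick Python sketch of the compounding, using this post’s own rough figures (about 40% from the 5700 to the 2080 Ti, then 30% to 40% more to reach the 3080); the percentages are estimates, not benchmark data, and `compound` is just a hypothetical helper for illustration.

```python
def compound(*gains: float) -> float:
    """Combine successive relative gains (0.40 == +40%) into one multiplier."""
    total = 1.0
    for g in gains:
        total *= 1.0 + g
    return total


# Rough estimates from the post, not measured results.
to_2080_ti = 0.40
for extra in (0.30, 0.40):
    m = compound(to_2080_ti, extra)
    print(f"+40% then +{extra:.0%}: {m:.2f}x the 5700 ({m - 1:.0%} faster)")
```

That puts the 3080 roughly 1.82x to 1.96x above the 5700, not the 70% to 80% that simply adding the percentages would suggest.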

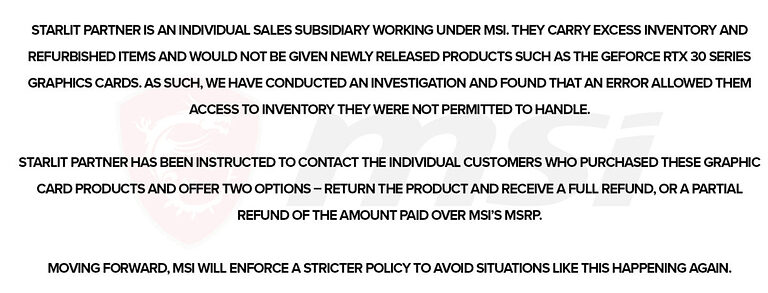

Competition between AMD and NVIDIA in the high-end segment of the GPU market would be nice to see, though. RDNA took a while, but if RDNA2 and the next gaming-oriented RDNA3 cards can start catching up to or matching NVIDIA’s Ampere and upcoming Hopper cards, this is going to get really interesting.